Severin Borenstein at the Energy Institute at Haas has taken another shot at rooftop solar net energy metering (NEM). He has been a continual critic of California’s energy decentralization policies, such as those on distributed energy resources (DER) and community choice aggregators (CCAs), and his viewpoints have been influential at the California Public Utilities Commission.

I read these two statements in his blog post and come to very different conclusions:

“(I)ndividuals and businesses make investments in response to those policies, and many come to believe that they have a right to see those policies continue indefinitely.”

Yes, the investor-owned utilities and certain large-scale renewable firms have come to believe that they have a right to see their subsidies continue indefinitely. California utilities are receiving subsidies amounting to $5 billion a year because of poor generation portfolio management. You can see this on your bill as the Power Charge Indifference Adjustment (PCIA). This dwarfs the purported subsidy from rooftop solar. Why is there no call to reform how we recover these costs from ratepayers and to force shareholders to carry their share of the burden? (And I’m not even bringing up the other big source of rate increases: excessive transmission and distribution investment.)

Why wasn’t there a similar outcry against bailing out PG&E in not one but TWO bankruptcies? Both PG&E and SCE have clearly relied on the belief that they deserve subsidies to stay in business. (SCE has ridden along behind PG&E in both cases to gain the spoils.) If these types of subsidies are to be called out, the focus needs to be on ALL the players here.

“(T)he reactions have largely been about how much subsidy rooftop solar companies in California need in order to stay in business.”

Then we must be monitoring two very different sets of media. I see much more about consumers’ ability to gain a modicum of energy independence from large monopolies that compel them to buy their service with no viable escape. I also see reactions about how this proposal will directly undermine our ability to reduce GHG emissions. It also directly conflicts with the California Energy Commission’s (CEC’s) Title 24 building standards, which use rooftop solar to achieve net zero energy and electrification in new homes.

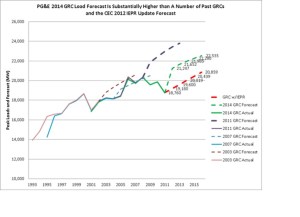

Along with the effort to kill CCAs, the apparent proposed solution is to concentrate all power procurement in the hands of three large utilities that have not demonstrated much skill at managing their portfolios. Why should we put all of our eggs in one (or three) baskets?

Borenstein continues to rely on an incorrect construct for the cost savings created by rooftop solar. That construct uses short-run hourly wholesale market prices instead of the long-term costs of constructing new power plants; it values transmission with rates derived from average embedded costs instead of full incremental costs; and it assumes that DER does not avoid distribution investment, contrary to the methods used in the utilities’ own rate filings. He also appears to ignore the benefits of co-locating local generation and storage, a setup that becomes much less financially viable if a customer adds storage but is still connected to the grid.

Yes, there are problems with the current compensation model for NEM customers, but we also need to recognize our commitments to customers who made investments believing they were doing the right thing. We need to acknowledge the savings they created for all of us and the push they gave to lower technology costs. We need to recognize the full set of values these customers provide, and how the current electricity market structure is too broken to properly compensate them for what we want them to do next: add more storage. Yet the real first step is to go to the source of the problem: out-of-control utility costs that ratepayers are forced to bear entirely.