The California Water Commission staff asked a group of informed stakeholders and experts about “how to shape well-managed groundwater trading programs with appropriate safeguards for communities, ecosystems, and farms.” I submitted the following essay in response to a set of questions.

In general, setting up functioning and fair markets is a more complex process than many proponents envision. Due to the special characteristics of water that make location particularly important, water markets are likely to be even more complex, and this will require more thinking to address in a way that doesn’t stifle the power of markets.

Anticipation of Performance

1. Market power is a concern in many markets. What opportunities or problems could market power create for overall market performance or for safeguarding? How is it likely to manifest in groundwater trading programs in California?

I was an expert witness on behalf of the California Parties in the FERC Energy Crisis proceeding in 2003 after the collapse of California’s electricity market in 2000-2001. That initial market arrangement failed for several reasons, including both the exploitation of features of the market’s internal functions and limitations on outside transactions that enhanced market power. An important requirement that can mitigate market power is the ability to sign long-term agreements, which reduce the amount of resources that are open to market manipulation. Clear definitions of the resource accounting used in transactions are a second important element. Lowering transaction costs and increasing liquidity are a third. Note that confidentiality has not prevented market gaming in electricity markets.

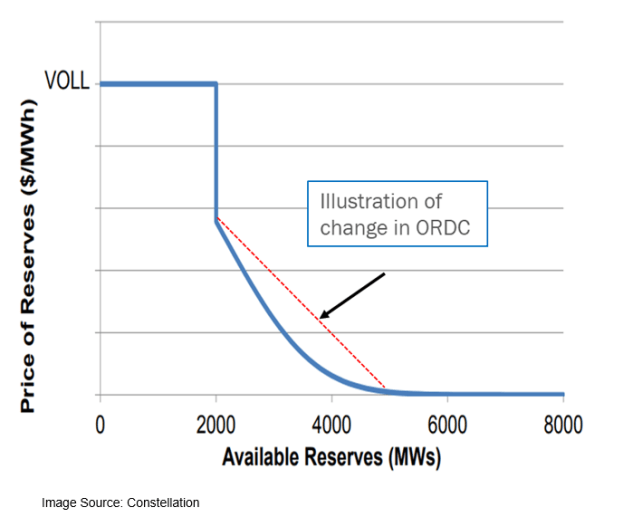

Recurring dry and drought conditions give groundwater markets fairly frequent opportunities for the exploitation of market power. The analogy for electricity is peak load conditions. Prices in the Texas ERCOT market went up 30,000-fold last February during such a shortage. Droughts in California happen more frequently than freezes in Texas.

The other dimension is that ownership within a GSA is often concentrated among a small number of property owners. This concentration makes it easier to manipulate prices even if buyers and sellers are anonymous. This situation is what led to the crisis in the CAISO market. (Beforehand, I was able to calculate the minimum generation capacity ownership required to profitably manipulate prices, and it was an amount held by many of the merchant generators in the market.) Those larger owners are also the ones most likely to have the resources to participate in certain types of market designs, because higher transaction costs act as barriers to others.
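The logic of a minimum ownership threshold can be illustrated with a small sketch. This is not the calculation used in the FERC proceeding; it is a simplified example that assumes a constant-elasticity demand curve, zero value for withheld water, and hypothetical function names and numbers. Under those assumptions, withholding a small amount tends to pay off once an owner’s share of supply exceeds the (absolute) demand elasticity, which can be quite low during a drought. The Herfindahl-Hirschman Index (HHI) is included as a standard summary of concentration.

```python
def hhi(shares):
    """Herfindahl-Hirschman Index on a 0-10,000 scale.

    `shares` are ownership shares in any consistent unit; they are
    normalized here so they need not sum exactly to 1.
    """
    total = sum(shares)
    return sum((100.0 * s / total) ** 2 for s in shares)


def revenue_after_withholding(share, withheld_fraction, elasticity):
    """Seller revenue when it withholds part of its own allocation.

    Assumptions (all hypothetical): total supply and the baseline price
    are normalized to 1, demand has constant elasticity `elasticity`
    (absolute value), and withheld water has no alternative value.
    """
    own_quantity = share
    withheld = withheld_fraction * own_quantity
    # Constant-elasticity inverse demand: price rises as supply shrinks.
    price = (1.0 - withheld) ** (-1.0 / elasticity)
    return price * (own_quantity - withheld)


def withholding_is_profitable(share, withheld_fraction, elasticity):
    """True if withholding raises revenue above selling everything at price 1."""
    baseline_revenue = share
    return revenue_after_withholding(share, withheld_fraction, elasticity) > baseline_revenue


if __name__ == "__main__":
    # Hypothetical GSA with four owners.
    print("HHI:", round(hhi([0.4, 0.3, 0.2, 0.1])))
    # With a drought-period elasticity of 0.1, compare a large and a small owner.
    print("30% owner gains:", withholding_is_profitable(0.30, 0.05, 0.1))
    print("5% owner gains:", withholding_is_profitable(0.05, 0.05, 0.1))
```

In this stylized setting the large owner profits from withholding 5 percent of its allocation while the small owner does not, which is the sense in which concentrated ownership eases price manipulation. A real assessment would need the actual market rules, pumping costs, and demand conditions.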

2. Given a configuration of market rules, how well can impacts to communities, the environment, and small farmers be predicted?

The impacts can be fairly well assessed with sufficient modeling that includes three important pieces of information. The first is a completely structured market design that can be tested and modeled. The second is a relatively accurate assessment of the costs for individual entities to participate in such a market. And the third is modeling the variation in groundwater depth to assess the likelihood of those swings exceeding current well depths for these groups.
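As a rough illustration of the third piece, the sketch below estimates how likely it is that depth to groundwater exceeds a well’s depth over a planning horizon. It is a minimal Monte Carlo example under loudly stated assumptions: annual changes in depth are treated as independent normal draws, and the function name, parameters, and numbers are all hypothetical. A real assessment would rely on a calibrated basin model and the trading rules being tested.

```python
import random


def prob_well_goes_dry(well_depth_ft, start_depth_ft, mean_annual_change_ft,
                       sd_annual_change_ft, years=10, trials=5000, seed=0):
    """Estimate the chance that depth to water reaches the well depth.

    Assumption (hypothetical): depth to groundwater follows a random walk
    whose yearly change is normal with the given mean and standard
    deviation. Positive changes mean the water table is falling.
    """
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    dry_count = 0
    for _ in range(trials):
        depth = start_depth_ft
        deepest = depth
        for _ in range(years):
            depth += rng.gauss(mean_annual_change_ft, sd_annual_change_ft)
            deepest = max(deepest, depth)
        # The well is counted as dry if the water table ever fell to its depth.
        if deepest >= well_depth_ft:
            dry_count += 1
    return dry_count / trials


if __name__ == "__main__":
    # Hypothetical comparison: a shallow domestic well vs. a deeper farm well,
    # with water starting 100 ft down and falling 2 ft per year on average.
    print("Shallow well (120 ft):", prob_well_goes_dry(120, 100, 2.0, 10.0))
    print("Deep well (200 ft):", prob_well_goes_dry(200, 100, 2.0, 10.0))
```

Running the same simulation under alternative market designs, each with its own assumed drawdown pattern, would show which groups’ wells face the greatest risk.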

Safeguards

3. What rules are needed to safeguard these water users? If not through market mechanisms directly, how could or should these users be protected?

These groups should not participate in shorter-term groundwater trading markets, such as those for annual allocations, unless they proactively elect to do so. They are unlikely to have the resources to participate in a usefully informed way. Instead, the GSAs should carve allocations out of the sustainable yields that are then distributed by any number of methods, including bidding for long-run allocations as well as direct allowances.

For tenant farmers, restrictions on landlords’ participation in short-term markets should be implemented. These can be specified through quantity limits, long-term contracting requirements, or time windows for guaranteed supplies to tenants that match lease terms.

4. What other kinds of oversight, monitoring, and evaluation of markets are needed to safeguard? Who should perform these functions?

These markets will likely require oversight to prevent market manipulation. Instituting market monitors akin to those who now oversee the CAISO electricity market and the CARB GHG allowance auctions is a potential approach. The state would most likely be the appropriate institution to provide this service. The functions for those monitors are well delineated by those other agencies. The single most important requirement for this function is clear authority and a willingness to enforce meaningful actions as a consequence of violations.

5. Groundwater trading programs could impact markets for agricultural commodities, land, labor, or more. To what degree could the safeguards offered by groundwater trading programs be undermined through the programs’ interactions with other markets? How should other markets be considered?

These interactions among different markets are called pecuniary externalities, and economists consider these as intended consequences of using market mechanisms to change behavior and investments across markets. For example, establishing prices for groundwater most likely will change both cropping decisions and irrigation practices, which in turn will impact both equipment and service dealers and labor. Safeguards must be established in ways that do not directly affect these impacts—to do otherwise defeats the very purpose of setting up markets in the first place. People will be required to change from their current practices and choices as a result of instituting these markets.

Mitigation of adverse consequences should account for catastrophic social outcomes to individuals and businesses that are truly outside of their control. SGMA, and associated groundwater markets, are intended to create economic benefits for the larger community. A piece often missing from the social benefit-cost assessment that leads to the adoption of these programs is compensation to those who lose economically from the change. For example, conversion from a labor-intensive crop to a less water-intensive one could reduce farm labor demand. Those workers should be paid compensation from a public pool funded by the beneficiaries.

6. Should safeguarding take common forms across all of the groundwater trading programs that may form in California? To the degree you think it would help, what level of detail should a common framework specify?

Localities generally lack the resources, expertise, or sufficient incentives to manage these types of safeguards. Further, the safeguards should be relatively uniform across the region to avoid creating inadvertent market manipulation opportunities among different groundwater markets. (That was one of the means of exploiting the CAISO market in 2000-01.) The level of detail will depend on other factors that can be identified after potential market structures are developed and a deeper understanding is prepared.

7. Could transactions occurring outside of a basin or sub-basin’s groundwater trading program make it harder to safeguard? If so, what should be done to address this?

The most important consideration is the interconnection with surface water supplies and markets. Varying access to surface water will affect the relative ability to manipulate market supplies and prices. The emergence of the NASDAQ Veles water futures market presents another opportunity to game these markets.

Among the most notorious market manipulation techniques used by Enron during the Energy Crisis was one called “Ricochet” that involved sending a trade out of state and then returning it down a different transmission line to create increased “congestion.” Natural gas market prices were also manipulated to impact electricity prices during the period. (Even the SCAQMD RECLAIM market may have been manipulated.) It is possible to imagine a similar series of trades among groundwater and surface water markets. It is not always possible to identify these types of opportunities and prepare mitigation until a full market design is prepared. They are particular to situations, and general rules are not easily specified.

Performance Indicators and Adaptive Management

8. Some argue that market rules can be adjusted in response to evidence a market design did not safeguard. What should the rules for changing the rules be?

In general, changing the rules for short-term markets, e.g., trading annual allocations, should be relatively easy. Investors should not be allowed to profit from market design flaws no matter how much they have spent. Changes must be carefully considered, but they also should not be easily impeded by those who are exploiting those flaws, as was the case in the fall of 2000 for California’s electricity market.