by Steven J. Moss and Richard J. McCann, M.Cubed

A potentially key barrier to decarbonizing California’s economy is escalating electricity costs.[1] To address this challenge, the Local Government Sustainable Energy Coalition, in collaboration with Santa Barbara Clean Energy, proposes to create a decarbonization incentive rate, which would enable customers who switch heating, ventilation and air conditioning (HVAC) or other appliances from natural gas, fossil methane, or propane to electricity to pay a discounted rate on the incremental electricity consumed.[2] The rate could also be offered to customers purchasing electric vehicles (EVs).

California has adopted electricity rate discounts previously to incentivize beneficial choices, such as retaining and expanding businesses in-state,[3] and converting agricultural pump engines from diesel to electricity to improve Central Valley air quality.[4]

- Economic development rates (EDR) offer a reduction to enterprises that are considering leaving, moving to or expanding in the state. The rate floor is calculated as the marginal cost of service for distribution and generation plus non-bypassable charges (NBC). For Southern California Edison, the current standard EDR discount is 12%; 30% in designated enhanced zones.[5]

- The AG-ICE tariff, offered from 2006 to 2014, provided a discounted line extension cost and limited the associated rate escalation to 1.5% a year for 10 years to match forecasted diesel fuel prices.[6] The program led to the conversion of 2,000 pump engines in 2006-2007, with commensurate improvements in regional air quality and reductions in greenhouse gas (GHG) emissions.[7]

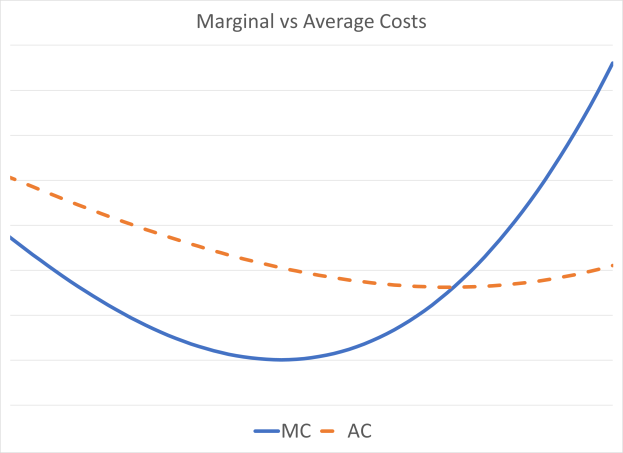

The decarbonization incentive rate (DIR) would use the same principles as the EDR tariff. Most importantly, load created by converting from fossil fuels is new load that has only recently, if at all, been included in electricity resource and grid planning. None of this load should incur legacy costs for past generation investments or procurement, nor for past distribution costs. In particular, this principle means that these new loads would be exempt from the power cost indifference adjustment (PCIA), the stranded asset charge that recovers legacy generation costs.

The California Public Utilities Commission (CPUC) also ruled in 2007 that NBCs, such as those for public purpose programs, CARE discount funding, Department of Water Resources bonds, and nuclear decommissioning, must be recovered in full in discounted tariffs such as the EDR rate. This proposal follows that direction and includes these charges, except the PCIA as discussed above.

Costs for incremental service are best represented by the marginal costs developed by the utilities and other parties either in their General Rate Case (GRC) Phase II cases or in the CPUC’s Avoided Cost Calculator. Since the EDR is developed using analysis from the GRC, the proposed DIR is illustrated here using SCE’s 2021 GRC Phase II information as a preliminary estimate of what such a rate might look like. A more detailed analysis likely will arrive at a somewhat different set of rates, but the relationships should be similar.

For SCE, the current average delivery rate, which includes distribution, transmission and NBCs, is 9.03 cents per kilowatt-hour (kWh). The average for residential customers is 12.58 cents. The system-wide marginal cost for distribution is 4.57 cents per kWh,[8] and 6.82 cents per kWh for residential customers. Including transmission and NBCs, the system average rate component would be 7.02 cents per kWh, or 22% less. The residential component would be 8.41 cents, or 33% less.[9]

The generation component similarly would be discounted. SCE’s average bundled generation rate is 8.59 cents per kWh, and 9.87 cents for residential customers. The rates derived using marginal costs are 5.93 cents for the system average and 6.81 cents for residential, or 31% less in both cases. For CCA customers, the PCIA would be waived on the incremental portion of the load. Each CCA would calculate its marginal generation cost as it sees fit.

For bundled customers, the average rate would go from 17.62 cents per kWh to 12.95 cents, or 26.5% less. Residential rates would decrease from 22.44 cents to 15.22 cents, or 32.2% less.
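The arithmetic behind these figures can be checked with a short sketch. The rate components are the ones quoted above; the dictionary layout and function names are illustrative assumptions, not part of any utility filing. Note that the residential components sum to 22.45 cents, one hundredth of a cent above the 22.44 cents quoted, presumably because of rounding in the underlying data.

```python
# Rate components in cents per kWh, as quoted in the text above.
# The structure and names here are illustrative only.
CURRENT = {
    "system": {"delivery": 9.03, "generation": 8.59},
    "residential": {"delivery": 12.58, "generation": 9.87},
}
MARGINAL = {
    "system": {"delivery": 7.02, "generation": 5.93},
    "residential": {"delivery": 8.41, "generation": 6.81},
}


def bundled_rate(components):
    """Sum delivery and generation into a bundled rate (cents per kWh)."""
    return round(sum(components.values()), 2)


def discount_pct(current, discounted):
    """Percentage reduction from the current rate to the discounted rate."""
    return round(100 * (current - discounted) / current, 1)


for customer_class in CURRENT:
    before = bundled_rate(CURRENT[customer_class])
    after = bundled_rate(MARGINAL[customer_class])
    print(customer_class, before, after, discount_pct(before, after))
```

Running the loop reproduces the bundled rates and percentage discounts stated in the preceding paragraph, to within the rounding noted above.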

Incremental loads eligible for the discounted decarb rate would be calculated based on projected energy use for the appropriate application. For appliances and HVAC systems, Southern California Gas offers line extension allowances for installing gas services based on appliance-specific estimated consumption (e.g., water heating, cooking, space conditioning).[10] Data employed for those calculations could be converted to equivalent electricity use, with an incremental use credit on a ratepayer’s bill. An alternative approach to determine incremental electricity use would be to rely on the California Energy Commission’s Title 24 building efficiency and Title 20 appliance standard assumptions, adjusted by climate zone.[11]
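One way to picture the gas-to-electricity conversion is the sketch below. It is only an illustration under stated assumptions: the annual therm figure, the gas appliance efficiency, and the heat pump coefficient of performance are hypothetical placeholders, not values from SoCalGas Rule 20 or the Title 24 and Title 20 standards. The only fixed quantity is the unit conversion of 1 therm (100,000 Btu) to roughly 29.3071 kWh.

```python
KWH_PER_THERM = 29.3071  # 1 therm = 100,000 Btu, about 29.3071 kWh


def equivalent_kwh(annual_therms, gas_efficiency, electric_cop):
    """Estimate the electricity needed to deliver the same useful heat.

    annual_therms:  estimated gas use for the appliance (hypothetical input)
    gas_efficiency: fraction of gas energy delivered as useful heat (assumed)
    electric_cop:   useful heat per unit of electricity for the replacement
                    appliance (assumed; 1.0 for resistance heating)
    """
    useful_heat_kwh = annual_therms * KWH_PER_THERM * gas_efficiency
    return useful_heat_kwh / electric_cop


# Hypothetical water-heating example: 200 therms per year, a 60% efficient
# gas heater, and a heat pump water heater with a COP of 3.0.
annual_kwh = equivalent_kwh(200, 0.60, 3.0)
monthly_credit_kwh = annual_kwh / 12
print(round(annual_kwh), round(monthly_credit_kwh))
```

An actual tariff would replace these placeholder inputs with the appliance-specific consumption estimates and efficiency assumptions from the sources named above.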

For EVs, the credit would be based on the average annual vehicle miles traveled in a designated region (e.g., county, city or zip code), as calculated by the California Air Resources Board for use in its EMFAC air quality model or from the Bureau of Automotive Repair (BAR) Smog Check odometer records, and the average fleet fuel consumption converted to electricity. For a car traveling 12,000 miles per year, that would equate to 4,150 kWh per year, or about 345 kWh per month.
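The EV example can be worked through as a rough sketch. The per-mile consumption below is simply the ratio implied by the figures in the text (4,150 kWh over 12,000 miles), and the bill split follows the description in footnote 2, with incremental use billed at the discounted rate and the remainder at the otherwise applicable tariff. The function names and the 800 kWh monthly total are hypothetical, and the flat residential rates of 15.22 and 22.44 cents stand in for what would in practice be a tiered tariff.

```python
# Consumption per mile implied by the example in the text.
IMPLIED_KWH_PER_MILE = 4150 / 12000


def ev_credit_kwh(annual_miles, kwh_per_mile=IMPLIED_KWH_PER_MILE):
    """Return (annual, monthly) incremental kWh credited for an EV."""
    annual = annual_miles * kwh_per_mile
    return annual, annual / 12


def monthly_bill_cents(total_kwh, credit_kwh, dir_rate, oat_rate):
    """Bill incremental use at the discounted rate and the rest at the OAT."""
    incremental = min(total_kwh, credit_kwh)
    return incremental * dir_rate + (total_kwh - incremental) * oat_rate


annual, monthly = ev_credit_kwh(12000)
# Hypothetical household using 800 kWh in a month, residential rates from above.
bill = monthly_bill_cents(800, monthly, dir_rate=15.22, oat_rate=22.44)
print(round(annual), round(monthly, 1), round(bill / 100, 2))
```

Capping the incremental use at the household's total consumption keeps the credit from exceeding actual usage in a low-use month, which is a design choice of this sketch rather than something the proposal specifies.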

[1] CPUC, “Affordability Phase 3 En Banc,” https://www.cpuc.ca.gov/industries-and-topics/electrical-energy/affordability, February 28-March 1, 2022.

[2] Remaining electricity use after accounting for incremental consumption would be charged at the current otherwise applicable tariff (OAT).

[3] California Public Utilities Commission, Decision 96-08-025. Subsequent decisions have renewed and modified the economic development rate (EDR) for the utilities individually and collectively.

[4] D.05-06-016, creating the AG-ICE tariff for Pacific Gas & Electric and Southern California Edison.

[5] SCE, Schedules EDR-E, EDR-A and EDR-R.

[6] PG&E, Schedule AG-ICE—Agricultural Internal Combustion Engine Conversion Incentive Rate.

[7] EDR and AG-ICE were approved by the Commission in separate utility applications. The mobile home park utility system conversion program was first initiated by a Western Mobile Home Association petition and then converted into a rulemaking, with significant revenue requirement implications.

[8] Excluding transmission and NBCs.

[9] Tiered rates pose a significant barrier to electrification and would cause the effective discount to be greater than estimated herein. The estimates above were measured against the average electricity rate, but added demand would be charged at the much higher Tier 2 rate. The decarb allowance could be introduced as a new Tier 0 below the current Tier 1.

[10] SCG, Rule No. 20 Gas Main Extensions, https://tariff.socalgas.com/regulatory/tariffs/tm2/pdf/20.pdf, retrieved March 2022.

[11] See https://www.energy.ca.gov/programs-and-topics/programs/building-energy-efficiency-standards; https://www.energy.ca.gov/rules-and-regulations/building-energy-efficiency/manufacturer-certification-building-equipment; https://www.energy.ca.gov/rules-and-regulations/appliance-efficiency-regulations-title-20